In this article, I will show the basics of the geometry shader: a useful and easy-to-use element of the rendering pipeline.

Geometry shaders in the rendering pipeline

Here’s a simple diagram. I marked in orange the parts that we can modify using shaders.

Our geometry shader goes into action after the vertex and tessellation shaders, and inside it we can use data passed from these previous stages. Inside our program we set out variables and emit vertices; all this data is then passed on (after rasterization) to the fragment shader, as if the geometry shader did not exist. Let’s see it in action.

First geometry shader

This stage operates on whole primitives (points, lines, triangles), not individual vertices. That makes it a great opportunity to render face normals.

The difference for this stage is that we specify the types of the input and output primitives. That means a geometry shader can create different “shapes.” For the output, we also declare the maximum number of vertices we emit (e.g. imagine getting a point and generating a triangle strip that forms a cube around this point).
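As a minimal sketch of those declarations, here is a pass-through geometry shader that takes triangles in and emits the same triangles out. The version directive (`#version 330 core`) is an assumption; adjust it to whatever your context provides.

```glsl
#version 330 core

// Input primitive: one triangle per invocation, so gl_in has 3 elements.
layout (triangles) in;
// Output primitive type and the upper bound on vertices emitted per invocation.
layout (triangle_strip, max_vertices = 3) out;

void main()
{
    // Re-emit each incoming vertex unchanged.
    for (int i = 0; i < 3; ++i) {
        gl_Position = gl_in[i].gl_Position;
        EmitVertex();
    }
    // Close the current strip; the three vertices form one triangle.
    EndPrimitive();
}
```

Changing the output layout (for example to `line_strip` or `points`) is what lets the shader turn one kind of primitive into another.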

For face normals, we calculate the middle of each face, then the normal vector. The last step is to emit two vertices per face: the middle of the face and that point moved along the normal.

Vertex data comes in an array of structs called gl_in, which you can index with standard array syntax. The number of elements is dictated by the input primitive we chose (e.g. three for triangles).
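The steps above could be sketched roughly like this. It is not the exact shader used for the screenshots. It assumes the vertex shader writes untransformed world-space positions into gl_Position, that triangles use counter-clockwise winding, and that the uniforms `uViewProjection` and `uNormalLength` (both hypothetical names) are set by the application.

```glsl
#version 330 core

layout (triangles) in;
// Each face produces one line segment: face center -> tip of the normal.
layout (line_strip, max_vertices = 2) out;

uniform mat4 uViewProjection; // hypothetical: combined view and projection matrix
uniform float uNormalLength;  // hypothetical: length of the drawn normal

void main()
{
    // Assumption: the vertex shader passed world-space positions through.
    vec3 p0 = gl_in[0].gl_Position.xyz;
    vec3 p1 = gl_in[1].gl_Position.xyz;
    vec3 p2 = gl_in[2].gl_Position.xyz;

    // Middle of the face.
    vec3 center = (p0 + p1 + p2) / 3.0;
    // Face normal from two edges; direction depends on the winding order.
    // Degenerate (zero-area) triangles give an undefined result here.
    vec3 normal = normalize(cross(p1 - p0, p2 - p0));

    // First end of the line: the face center.
    gl_Position = uViewProjection * vec4(center, 1.0);
    EmitVertex();

    // Second end of the line: the center moved along the normal.
    gl_Position = uViewProjection * vec4(center + normal * uNormalLength, 1.0);
    EmitVertex();

    EndPrimitive();
}
```

Applying the projection in the geometry shader keeps the normal computation in world space, where the cross product still gives a true perpendicular.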

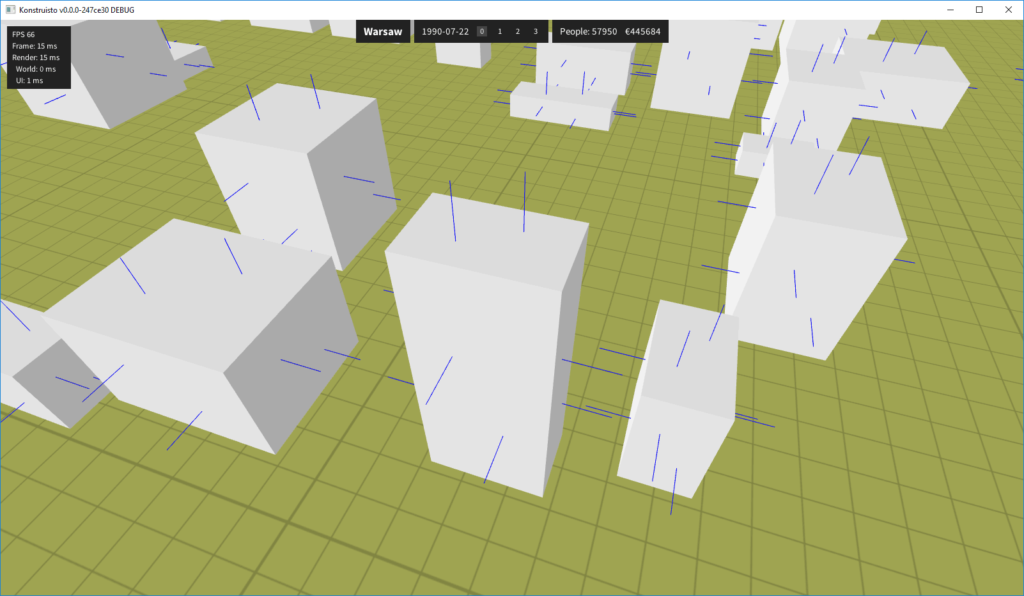

We have to color the lines in the fragment shader, and here is the final result:

Notice that we have to run two different programs to render the buildings and the normals, since a single geometry shader can output only one type of primitive. The handy thing is that we can use the same buffer object, because we generate the normals from the same triangles.

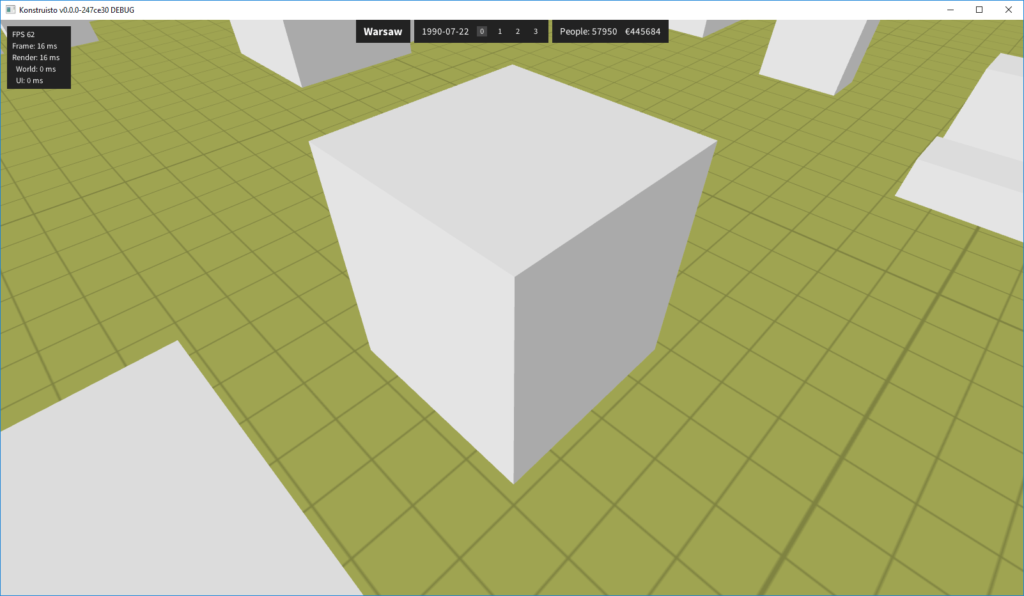

Flat shading

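We can also define out variables inside geometry shaders. OpenGL exports the current values of the out variables when we call EmitVertex(); that means you can calculate something (like lighting) once per primitive and reuse the result for every vertex. Note that GLSL leaves output values undefined after EmitVertex() returns, so assign them again before each call. The values are interpolated across the primitive as usual before they reach the fragment shader; when every vertex of a triangle carries the same value, the whole face gets a single color.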
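A minimal flat-shading sketch under the same assumptions as before (world-space positions in gl_Position, counter-clockwise winding). The uniforms `uViewProjection`, `uLightDir` and `uBaseColor`, the output `gColor`, and the 0.2 ambient term are all hypothetical choices made for illustration.

```glsl
#version 330 core

layout (triangles) in;
layout (triangle_strip, max_vertices = 3) out;

uniform mat4 uViewProjection; // hypothetical: combined view and projection matrix
uniform vec3 uLightDir;       // hypothetical: normalized direction towards the light
uniform vec3 uBaseColor;      // hypothetical: unlit surface color

out vec3 gColor;              // read by the fragment shader as "in vec3 gColor"

void main()
{
    vec3 p0 = gl_in[0].gl_Position.xyz;
    vec3 p1 = gl_in[1].gl_Position.xyz;
    vec3 p2 = gl_in[2].gl_Position.xyz;

    // One normal and one lighting value for the whole face.
    vec3 normal = normalize(cross(p1 - p0, p2 - p0));
    float diffuse = max(dot(normal, uLightDir), 0.0);
    vec3 faceColor = uBaseColor * (0.2 + 0.8 * diffuse);

    for (int i = 0; i < 3; ++i) {
        // Assign outputs before every EmitVertex(); they are undefined afterwards.
        gColor = faceColor;
        gl_Position = uViewProjection * gl_in[i].gl_Position;
        EmitVertex();
    }
    EndPrimitive();
}
```

Because all three vertices carry the same `gColor`, interpolation has nothing to blend and each triangle comes out in one solid color.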

See below how three perpendicular walls have different colors. Every wall consists of two triangles, but you can’t tell the two triangles apart.

Further reading

- Official wiki on Geometry Shaders

- Article on geometry shaders from LearnOpenGL.com

- Really old (mentions fixed pipeline) official article, which links to papers on lighting models

Good article! Always nice to read something on modern 3d graphics

Hi, nice art. How much time did you need to learn shaders well?

Honestly, I wouldn’t say I know shaders well. 😉

GLSL is similar to C/C++ and there is very little new syntax to learn. If you know some basics of algebra, you should be able to do nice things in under a week.